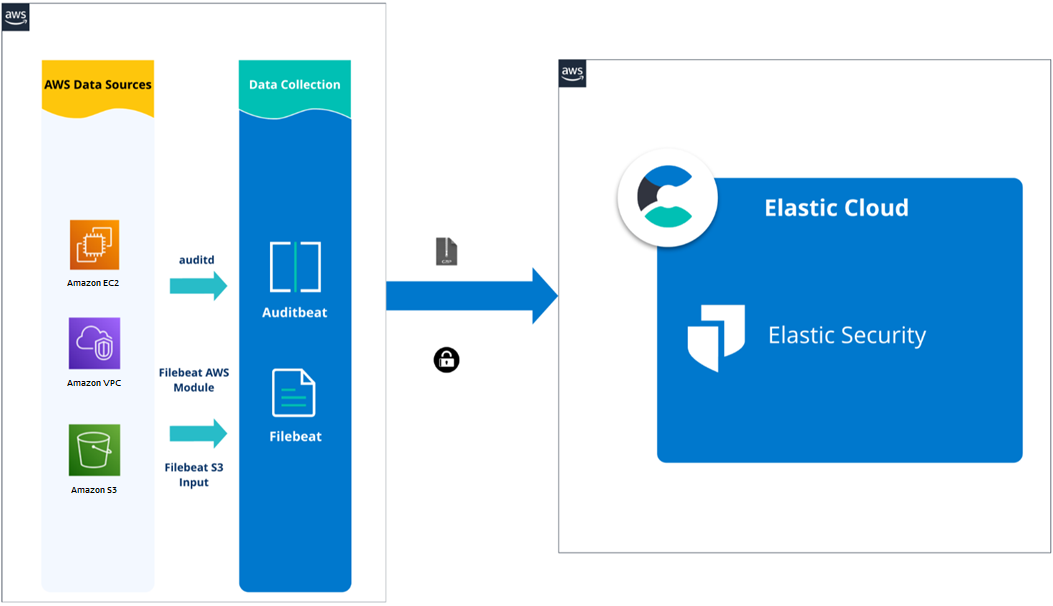

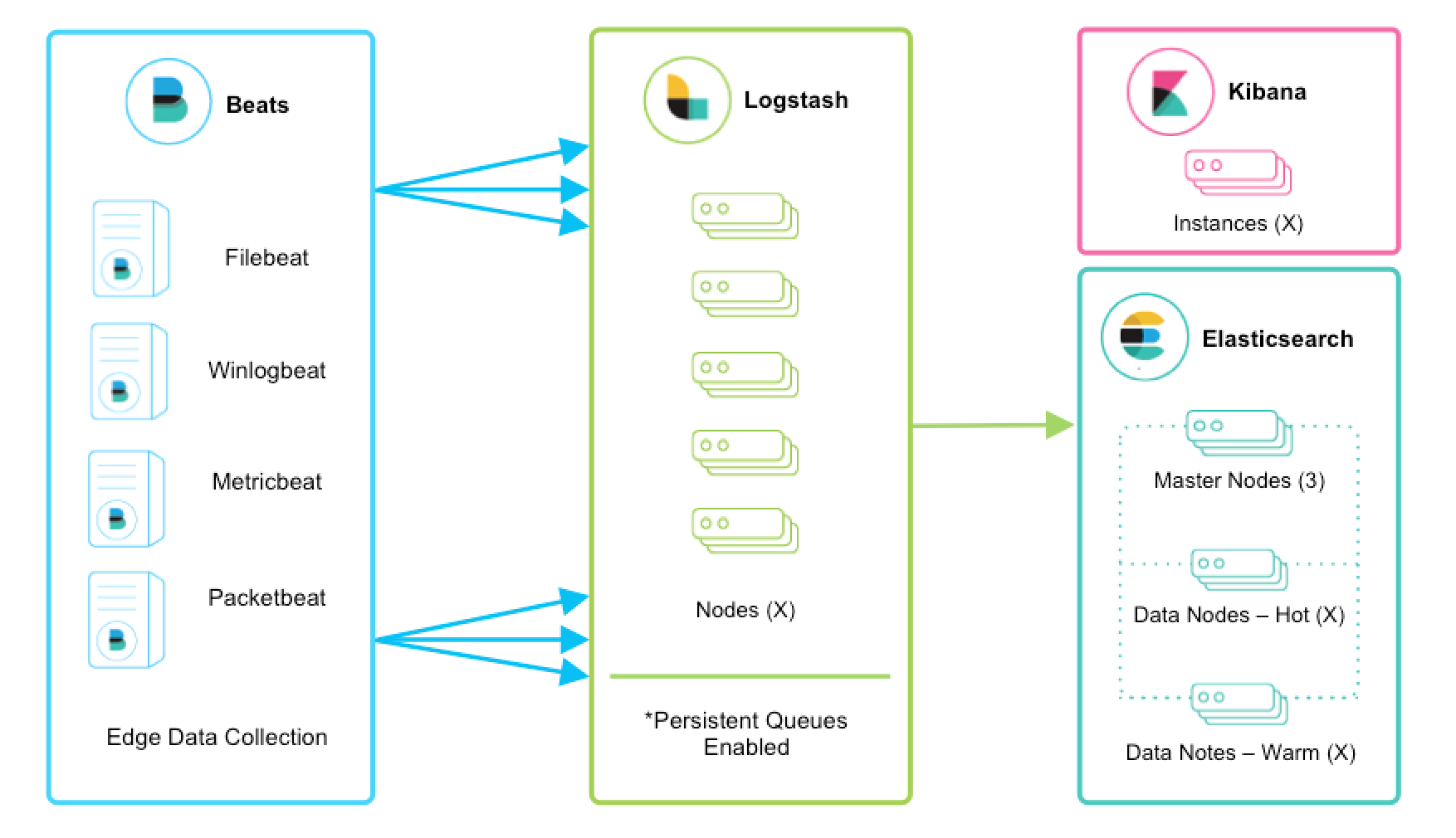

It would be very helpful to allow Filebeat to output to S3 directly. Currently, if one wants to store logs on S3, Logstash is required. When using a service like AWS Elastic Beanstalk, it is very handy to push logs to S3 for persistence. I can imagine 100 other use cases, but this is the current one that would have simplified my life.

The only alternative is rather complicated: you have to configure the AWS ECS agent to support GELF logging, use GELF logging to ship to Logstash, and then push the logs to S3 via the Logstash output. Major downside: there is a race condition with this approach, where you will lose the initial logs from containers that start before the Logstash container. One big downside: you cannot use docker logs for quick inspection anymore, because AWS doesn't offer dual logger output. The json-file logging driver, which Filebeat supports, would work out of the box here. Another issue is that you must run Logstash on each application instance, plus the ones you need for ingestion into Elasticsearch. It would certainly be easier to use Filebeat when just getting started.

The current S3 input in Filebeat relies on SQS to send a notification when a new log message is stored in the S3 bucket. Some users only want a simple polling method applied to the S3 bucket periodically without SQS, similar to the Logstash s3 input. This would be very useful, especially for users who don't need near real-time log message collection.

Building the Elasticsearch image with the repository-s3 plugin:

    $ docker build -t es .
    Sending build context to Docker daemon 2.048kB
    Step 1/2 : FROM /elasticsearch/elasticsearch:7.17.0
    Step 2/2 : RUN bin/elasticsearch-plugin install --batch repository-s3
    > Downloading repository-s3 from
    WARNING: plugin requires additional permissions
    accessDeclaredMembers
    * es.allow_insecure_settings read,write
    For descriptions of what these permissions allow and the associated risks.
    > Please restart Elasticsearch to activate any plugins installed
    Removing intermediate container 3828eb6c07b7

    es latest 86015a112bfe 3 seconds ago 618MB

We've created a custom container image named es:latest that has our plugin installed.
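The Dockerfile and build step can be scripted end to end. This is only a sketch under stated assumptions: `BASE_IMAGE` is a hypothetical variable name, and its default is a placeholder, because the registry prefix of the `FROM` line is not shown in this post and has to be supplied by you. The script writes the Dockerfile into a scratch directory and runs the build only when docker is installed and a real image reference was given.

```shell
#!/bin/sh
set -eu

# Hypothetical variable: set BASE_IMAGE to the full Elasticsearch 7.17.0 image
# reference. The default is a placeholder, not a real registry.
BASE_IMAGE="${BASE_IMAGE:-REPLACE_WITH_REGISTRY/elasticsearch/elasticsearch:7.17.0}"

# Use a scratch directory so the build context holds only the Dockerfile.
build_dir=$(mktemp -d)

cat > "$build_dir/Dockerfile" <<EOF
FROM ${BASE_IMAGE}
# --batch accepts the plugin permission prompt non-interactively.
RUN bin/elasticsearch-plugin install --batch repository-s3
EOF

cat "$build_dir/Dockerfile"

# Build only when docker exists and the placeholder has been replaced.
if command -v docker >/dev/null 2>&1 \
   && [ "${BASE_IMAGE#REPLACE_WITH_REGISTRY}" = "$BASE_IMAGE" ]; then
  docker build -t es "$build_dir"
  docker images es
else
  echo "skipping docker build (docker missing or BASE_IMAGE not set)" >&2
fi
```

In a minikube environment the same script would be run inside `minikube ssh`, so that the resulting es:latest image lands in the cluster's own image store.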

We can check for our secret with:

    $ kubectl describe secrets s3-creds
    $ kubectl get secrets s3-creds -o go-template=''

Log into Kibana, go to Dev Tools, and put in PUT _snapshot/minio

Now go to Stack Management -> Snapshot and Restore -> Repositories -> minio -> verify repository

Awesome! Let's create a policy and take a snapshot.

And we have snapshots!! Let's do this in Helm as well.

Create our secret:

    $ kubectl create secret generic s3-creds --from-literal=s3._key='minioadmin' --from-literal=s3._key='minioadmin'

Create my local container image with the plugin installed. My environment is in minikube, so I will need to minikube ssh to build the image:

    $ minikube mkdir cd cat > Dockerfile
    FROM /elasticsearch/elasticsearch:7.17.0
    RUN bin/elasticsearch-plugin install --batch repository-s3

This is a very simple, not secure setup, just for testing.

    $ mkdir data
    $ mc alias set myminio minioadmin minioadmin

Instead of getting mc I am just going to browse to my minio GUI and create a bucket.

We can create Kubernetes secrets in many, many ways. The simplest way is to do it literally:

    $ kubectl create secret generic s3-creds --from-literal=s3._key='minioadmin' --from-literal=s3._key='minioadmin'

Alternatively, you can create YAML files for this and apply them:

    $ cat s3.yaml
    s3._key: bWluaW9hZG1pbg==

Alternatively, you can even use stringData:

    $ cat s3.yaml
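Values under a Secret's `data` field must be base64-encoded, while `stringData` takes them as plain text. Here is a minimal sketch of both forms. Everything named in it is an assumption for illustration: `ACCESS_KEY_NAME` and `SECRET_KEY_NAME` are hypothetical stand-ins for the two s3._key settings, whose full names are not shown in this post, and the file names `s3-data.yaml` and `s3-stringdata.yaml` are made up.

```shell
#!/bin/sh
# Hypothetical placeholders: substitute the real keystore setting names.
ACCESS_KEY_NAME='ACCESS_KEY_NAME'
SECRET_KEY_NAME='SECRET_KEY_NAME'
value='minioadmin'   # test credential only, as in the insecure setup above

# printf avoids the trailing newline that echo would include in the encoding.
encoded=$(printf '%s' "$value" | base64)
echo "$encoded"

# Form 1: data, values base64-encoded by hand.
cat > s3-data.yaml <<EOF
apiVersion: v1
kind: Secret
metadata:
  name: s3-creds
type: Opaque
data:
  ${ACCESS_KEY_NAME}: ${encoded}
  ${SECRET_KEY_NAME}: ${encoded}
EOF

# Form 2: stringData, values in plain text; Kubernetes encodes them on write.
cat > s3-stringdata.yaml <<EOF
apiVersion: v1
kind: Secret
metadata:
  name: s3-creds
type: Opaque
stringData:
  ${ACCESS_KEY_NAME}: ${value}
  ${SECRET_KEY_NAME}: ${value}
EOF

# Decoding the value again is a quick sanity check.
decoded=$(printf '%s' "$encoded" | base64 --decode)
echo "$decoded"

# Apply either manifest with: kubectl apply -f s3-data.yaml
```

Both manifests produce the same stored Secret; `stringData` is simply easier to write by hand, at the cost of keeping the plain-text value in the file.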

For this example I will stand up a very simple minio server on my localhost. Create Kubernetes secrets for the s3._key and s3._key. Configure my Elasticsearch pod with an initContainer to install the repository-s3 plugin and secureSettings to create the keystore.
